NVIDIA Doesn’t Matter (for Driving Automation)

The full-autonomy frontier it can’t reach

As part of our interest in innovative mobility, Changing Lanes covers all aspects of driving automation. Today’s piece is on the technical and business side, and builds on my earlier work with Jannik Reigl on why the most capable AV companies own their full technology stacks. It is part of an informal series on the AI revolution and its implications for self-driving cars.

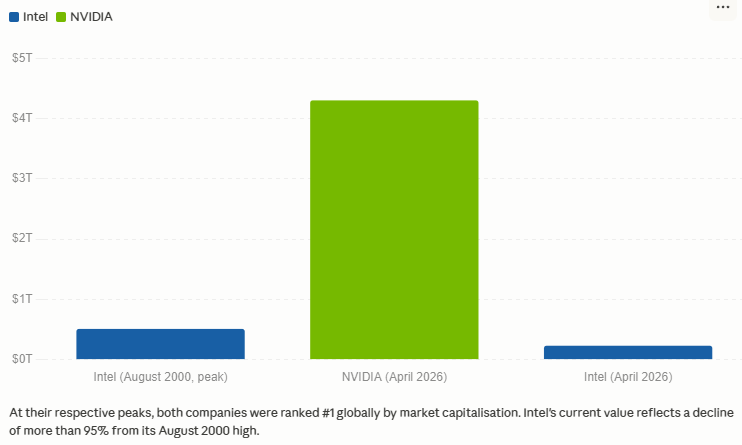

In August 2000, Intel was the most valuable company in the world, with a market capitalization of roughly $509 billion. It earned that valuation by selling the chips inside almost every PC on the planet. “Intel Inside” was both a marketing sticker and a description of the entire industry’s architecture: one company’s processor, running billions of machines, invisible to end users but indispensable to everyone who built for them. Intel’s dominance seemed self-reinforcing, because the more machines ran on Intel, the more software was optimized for Intel, and the harder it became for customers to leave.

But, gradually and then suddenly, the biggest customers left anyway. ARM captured mobile; Intel cancelled its Atom mobile programme after spending an estimated $10 billion in OEM subsidies to capture roughly 1% of the market, formally walking away in April 2016. Apple announced its move to custom ARM chips in June 2020 and completed the transition when it discontinued its last Intel Mac in June 2023. Amazon, Google, and Microsoft have all built their own ARM-based server chips; by 2025, more than half of all new CPU capacity added to AWS ran on Amazon’s own Graviton silicon. Today, Intel’s market capitalization is less than half its peak.

In April 2026, the most valuable company in the world is NVIDIA. It has the same kind of dominance, and that dominance is the same kind of trap.

Chart courtesy of Claude

NVIDIA is so valuable because its core product, the GPU—originally designed to render video-game graphics—is exceptionally well-suited to training large AI models. That’s why NVIDIA, in our moment, is unavoidable: not just for AI, but also for AV.

To function, AVs need a robust, constantly updated model of the world around them, built from continuous streams of sensor data: cameras, lidar, radar, and more. They must fuse that data into a world model and, based on that model, make ongoing decisions, sometimes within a fraction of a second, to ensure the vehicle operates safely and appropriately. And they must do this reliably over millions of miles.

This is, or should be, an AI problem, and NVIDIA makes the hardware the AI industry runs on. It therefore seems reasonable to expect that NVIDIA is just as relevant to the future growth and profits of AV as it is to AI. Jensen Huang, NVIDIA’s CEO, certainly wants us to think that: he has described an AV as a robot that needs a brain, and claimed that NVIDIA is the natural supplier of that brain. And he’s got announcements to back his claims up. NVIDIA has partnered with Mercedes-Benz, Volvo, BYD, Jaguar Land Rover, and other manufacturers, and has disclosed automotive revenue of $2.3 billion in fiscal 2026.

It’s tempting to think that NVIDIA will be to the self-driving car what Intel was to the personal computer: the provider of the infrastructure inside everything, the platform the whole industry runs on.

I’m not so sure.

The analogy holds insofar as NVIDIA today doesn’t build its own AVs but instead provides the infrastructure for those of other firms. Relying on NVIDIA makes sense for companies that don’t own their full technology stack, but as Jannik Reigl and I have written, owning your own stack is a prerequisite for success, and the biggest companies know it.

The companies that have had the most success in this space believe their competitive advantage requires tight hardware-software co-design, and accordingly have all built their own silicon. So, despite its prominence in the AI sector generally, NVIDIA’s position in driving automation is narrower: indispensable to the industry’s middle, unsuitable for companies that need to own the whole stack.

NVIDIA Was King of ADAS

The most important product in NVIDIA’s automotive business is a chip called DRIVE Orin.

DRIVE Orin is a ‘system-on-chip’, i.e., a single piece of silicon that combines a GPU, a dedicated AI inference accelerator, and an image signal processor, designed to run the trained neural networks that parse camera and sensor feeds in real time. Unlike a data-centre GPU, which is built to train models at scale, Orin is optimized for inference: fast, efficient, and cool enough to operate inside a car. Reliability matters here because automotive functional safety is governed by stringent standards, chiefly ISO 26262, an international standard whose Automotive Safety Integrity Levels (ASILs) run from ASIL-A (lowest) to ASIL-D (highest). NVIDIA had DRIVE Orin certified to meet ASIL-D systematic requirements, the level reserved for the most hazardous failures: potentially fatal, likely to arise in ordinary driving, and hard for a driver to control.
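To make the ASIL scale concrete: ISO 26262-3 rates each hazardous event on three classes (severity S0 to S3, exposure E0 to E4, controllability C0 to C3) and looks the combination up in a table. The Python sketch below assumes the commonly noted shortcut that, for non-zero classes, the table is equivalent to summing the three class numbers; the function name `asil` and its integer encoding are illustrative, not any official API. It shows why ASIL-D is the extreme case: only the worst class on every axis reaches it.

```python
# Hypothetical helper: ASIL determination per the ISO 26262-3 risk table,
# using the shortcut that the table matches S + E + C for non-zero classes.
ASIL_BY_SUM = {7: "ASIL-A", 8: "ASIL-B", 9: "ASIL-C", 10: "ASIL-D"}


def asil(severity: int, exposure: int, controllability: int) -> str:
    """Return the ASIL for one hazardous event.

    severity: 0-3 (S3 = life-threatening or fatal injuries)
    exposure: 0-4 (E4 = high probability of the driving situation)
    controllability: 0-3 (C3 = difficult to control or uncontrollable)
    "QM" means ordinary quality management, with no ASIL requirement.
    """
    if not (0 <= severity <= 3 and 0 <= exposure <= 4 and 0 <= controllability <= 3):
        raise ValueError("class index out of range")
    if 0 in (severity, exposure, controllability):
        return "QM"  # any class of 0 rules out an ASIL
    return ASIL_BY_SUM.get(severity + exposure + controllability, "QM")


# A potentially fatal failure, in a common situation, that the driver
# cannot realistically correct, versus the same hazard if normally controllable.
print(asil(3, 4, 3))
print(asil(3, 4, 2))
```

The sum rule is only a compact restatement of the standard’s lookup table; a real safety case assigns ASILs to safety goals through a full hazard analysis, and components such as a chip inherit requirements from the goals they support.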

Earning that certification is not straightforward. The process requires years of design documentation, formal hazard analysis, and a third-party audit. In the most detailed public case, the certifying body reported that the process took a supplier more than four years. The engineering overhead alone is estimated to add 30% to standard chip-development costs. General-purpose chipmakers see no reason to undertake this safety certification because none of their other markets demands it. But NVIDIA took it on and dug itself a meaningful moat in the process. A rival chip that matches Orin’s raw compute but lacks ASIL-D certification will, in practice, struggle to win a place in a production vehicle in most major markets, because automakers treat ISO 26262 compliance as a de facto requirement for safety-critical functions. The certification is part of what NVIDIA is selling.

NVIDIA didn’t stop at the chip. It built ‘DRIVE Sim’, a simulation environment for training and testing autonomous systems before they touch a real road, and ‘DriveOS’, the software layer that manages how Orin’s hardware resources are allocated. It also published a reference architecture, called Hyperion, that showed Tier 1 automotive suppliers exactly how to build a production system around Orin. The effect was to reduce the work an automaker had to do itself. If an automobile is indeed a robot that needs a brain, NVIDIA sells not only the brain, but the robot’s DNA, the instruction manual for the whole body.

The most commonly quoted measure of AI-chip throughput is ‘tera operations per second’ (TOPS): the number of operations, in trillions, the chip can perform each second. At launch, Orin boasted 254 TOPS, easily outpacing its closest competitors. Mobileye, the Israeli chip designer, offered its EyeQ5, which delivered only around 17.6 TOPS, while San Diego-based Qualcomm’s Snapdragon Ride product, targeting the same applications, had a mid-range SKU at approximately 100 TOPS.[1]
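For a sense of scale, the short Python sketch below turns those headline figures into ratios. It assumes the numbers are vendor peak TOPS, which say little about performance on real driving workloads, and derives the EyeQ5 figure the same way the first footnote does (the EyeQ Ultra’s 176 TOPS divided by ten). The constant and function names are illustrative only.

```python
# Headline peak-TOPS figures as quoted in this article. Vendor peak numbers
# say little about real driving workloads; treat the ratios as rough.
ORIN_TOPS = 254.0
EYEQ_ULTRA_TOPS = 176.0

# Estimate EyeQ5 from Mobileye's claim that EyeQ Ultra equals ten EyeQ5s.
eyeq5_tops = EYEQ_ULTRA_TOPS / 10

competitors = {
    "Mobileye EyeQ5 (estimated)": eyeq5_tops,
    "Qualcomm Snapdragon Ride (mid-range SKU, approx.)": 100.0,
}


def ratio_vs_orin(tops: float) -> float:
    """Return how many times Orin's peak TOPS exceeds the given chip's."""
    return ORIN_TOPS / tops


for name, tops in competitors.items():
    print(f"{name}: {tops:.1f} TOPS, Orin offers {ratio_vs_orin(tops):.1f}x")
```

On these figures, Orin offered roughly 14.4 times the EyeQ5’s peak throughput and about 2.5 times that of the mid-range Snapdragon Ride part.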

NVIDIA’s investment paid off. Between roughly 2022 and 2025, DRIVE Orin became the dominant AI chip for advanced driver-assistance systems (ADAS) across the Chinese electric vehicle market. More than ten Chinese OEMs shipped consumer vehicles running Orin during this period. BYD, the world’s largest EV manufacturer by volume, equipped more than one million vehicles with its NVIDIA-powered “God’s Eye” driver-assistance system by mid-2025. Other Chinese automakers, such as NIO, XPeng, Li Auto, Zeekr, and Xiaomi, were moving rapidly from basic cruise control to more advanced ADAS at the time, and NVIDIA had what they needed: a production-ready chip with the right performance, the right safety credentials, and a complete development package. That package let them make the transition right away instead of building their own chips, simulation environments, or vehicle-chip integration systems.

NVIDIA had competition in the Chinese market from Mobileye and Qualcomm, but not serious competition: only NVIDIA could deliver that level of performance at that scale, which meant it could charge a significant premium. NIO CEO William Li stated on the firm’s Q3 2025 earnings call that switching to its own chip delivered “approximately 10,000 yuan ($1,420) in cost optimization per vehicle,” a figure that hints at how much margin NVIDIA was earning on each Orin.

Undoubtedly, some of NVIDIA’s customers would prefer to take their business elsewhere, but doing so is difficult. Finding a new supplier would mean re-earning safety certifications from scratch, establishing new relationships with parts manufacturers, replacing software toolchains, and retraining engineering teams. Bringing chip design in-house incurs all of these difficulties and more.

But staying put might be worse. NIO VP Wang Qiyan put the underlying logic plainly: “Leaving our fate in the hands of suppliers is terrifying.” That’s why, despite the cost and challenge, NIO ultimately switched to an in-house chip, a move that took more than 600 engineers, more than $140 million, and four years from project start to deployment (2021 to March 2025).
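NIO’s figures also allow a rough break-even estimate. The Python sketch below assumes that the quoted saving of about 10,000 yuan ($1,420) per vehicle is already net of the in-house chip’s own unit cost, and treats the programme as a one-off $140 million outlay (a lower bound, since the reported figure is “more than” that). It ignores discounting, financing, and the ongoing cost of the chip team; the helper name is hypothetical.

```python
import math


def break_even_vehicles(program_cost_usd: float, saving_per_vehicle_usd: float) -> int:
    """Smallest number of vehicles whose per-unit savings repay a one-off
    chip-programme cost. Hypothetical helper: no discounting, no running costs."""
    if saving_per_vehicle_usd <= 0:
        raise ValueError("per-vehicle saving must be positive")
    return math.ceil(program_cost_usd / saving_per_vehicle_usd)


# NIO figures as reported above; the programme cost is a lower bound.
NIO_PROGRAM_COST_USD = 140_000_000
NIO_SAVING_PER_VEHICLE_USD = 1_420  # ~10,000 yuan per vehicle

n = break_even_vehicles(NIO_PROGRAM_COST_USD, NIO_SAVING_PER_VEHICLE_USD)
print(f"Break-even after roughly {n:,} vehicles")
```

Under those assumptions the programme pays for itself after roughly 99,000 vehicles. The result is only as good as its inputs, but it shows why the decision turns on volume: at a hypothetical 10,000 vehicles a year, payback would take about a decade, while at several times that it would take only a few years.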

Whether NIO’s switch is good or bad news for NVIDIA depends on your perspective. It shows that reliance on NVIDIA’s product (what an economist might call its installed base) has become a moat, and that crossing it to make one’s own chips requires immense time, expense, and inconvenience. But it also shows that if the economic case for leaving becomes strong enough, those NVIDIA customers able to make the switch will push through it, as NIO did.

The Frontier Built Its Own Silicon

Some notable names are absent from NVIDIA’s customer list. Waymo isn’t there, nor are Tesla and Zoox: none of the companies most associated with the frontier of genuine driverless capability. None of these companies has built on NVIDIA’s current automotive platform (not Orin, not DriveOS, not the Hyperion reference architecture), even as a transitional step. Tesla did use NVIDIA’s earlier DRIVE PX 2 hardware in its Autopilot computers from 2016, but replaced it with its own chip in 2019.

To understand why, it helps to look at how the most capable AV systems are built.

In self-driving cars built this decade, what separates the frontier companies is not a better chip but their co-design loop, in which sensors, compute, and software are developed together, each constraining and enabling the others. The chip shapes the models; the models shape the chip; the sensors constrain both. Designing all three together, learning quickly what to improve, and deploying those improvements fast is the key to success in this domain. Co-design yields a faster, more power-efficient, and ultimately cheaper unit than anything assembled from off-the-shelf parts.

This is why Waymo builds everything from scratch.[2] Waymo operates more than 3,000 robotaxis across ten American cities, completes 500,000 paid rides every week, and has logged more than 200 million fully autonomous miles. Its sixth-generation system, which began fully autonomous operations in February 2026, uses custom chips designed entirely in-house. Waymo didn’t adopt NVIDIA’s automotive platform at any stage of its development. From the start (as far as outsiders can tell), the company concluded that the hardware-software design loop was too important to hand off to an outside supplier.

The same pattern holds across every company that has reached genuine scale in full autonomy. Tesla has shipped its own AI chips in its vehicles since 2019, and before its robotaxi programme shut down, Cruise had a 750-person hardware team and had begun developing four custom chips. Its head of hardware, Carl Jenkins, told Reuters that NVIDIA’s pricing was simply unsustainable: “There is no negotiation because we’re tiny volume.”[3]

There is one exception: Aurora, the first company to operate a commercial driverless Class 8 trucking service. Aurora launched that service in April 2025. It operates on U.S. highways and had logged more than 250,000 driverless miles by January 2026. And it uses NVIDIA chips.

Aurora’s reliance on NVIDIA may seem to suggest that NVIDIA will be a major player in frontier AV after all, but the conclusion doesn’t follow. Waymo’s system is the product of a tightly integrated sensor suite (13 cameras, four lidar units, and six radar units, all custom-designed and built in California) combined with a custom compute stack and proprietary software developed together from the start. Its performance depends on that integration at every layer, which is why controlling the hardware matters. Tesla and Zoox go even further, building their own vehicles and integrating their sensor suites into them. Aurora’s strategy is the opposite: it aims to build a strong standalone driving-automation suite, the Aurora Driver, and deploy it across OEM platforms it doesn’t control and cannot customize. For Aurora, then, NVIDIA’s off-the-shelf, safety-certified hardware is the cheapest and best fit for its needs.

That structural logic makes Aurora a different kind of test case. The question is not whether NVIDIA’s platform survives frontier defection (Aurora has, at present, no motive to defect), but whether NVIDIA can support a full-autonomy deployment at production scale without triggering the cost pressures that pushed NIO and Cruise toward custom silicon. Aurora’s planned 2027 deployment on NVIDIA’s next-generation hardware will begin to answer that.

At this point, one may wonder whether this is the AV equivalent of Apple vs. Android: a premium integrated tier and a larger “good enough” platform, both of which can coexist indefinitely. I think that’s unlikely. The mobile-phone sector sustained that bifurcation because each camp offered its own standardized platform on which others could sell software. In driving automation, the competitive advantage is the hardware-software stack itself. There is no equivalent of a ‘better app’ that runs on general-purpose compute and outperforms tight co-design.

So NVIDIA doesn’t have Waymo or Tesla, but it does have Aurora, and more besides. It’s tempting to conclude that NVIDIA doesn’t need Waymo, Tesla, or any American self-driving firm, since its strength in driving automation lies in supplying the Chinese ADAS market, which looks like a strong foundation.

But that foundation is eroding.

As I mentioned, NIO has migrated its main vehicle lineup to an in-house chip. Meanwhile, XPeng claims its in-house Turing chip delivers roughly three times the processing capacity of a single Orin unit. Horizon Robotics, a Chinese chipmaker competing directly with NVIDIA in automotive AI, now commands approximately 50% of the Chinese domestic driver-assistance chip market by volume. The same pressures that push full-autonomy companies toward custom hardware (managing costs, controlling their own supply chains, and the frustrating inability to set their own power and performance targets) now bear on the Chinese manufacturers that have grown big enough to go it alone, as NIO did. (It certainly doesn’t help that in September 2025, the Chinese government issued an advisory explicitly discouraging Chinese OEMs from purchasing NVIDIA chips, but that directive seems less like a sudden break and more like pushing on an open door.)

Outside China, NVIDIA’s position looks intact for now. Aurora has committed to the company’s next-generation chip, DRIVE Thor, which delivers roughly four times the processing capacity of a single Orin unit, with a 2027 deployment target. DRIVE Thor has more than 15 announced adopters across passenger vehicles, trucks, and robotaxis, and its product timeline appears to be proceeding normally. So NVIDIA seems safe there, for as long as no Western OEM reaches the volume threshold that would make going in-house compelling.

Intel’s Present Is NVIDIA’s Future

NVIDIA is a supplier of silicon infrastructure to companies that don’t need to own the whole stack. Most of the companies building driver-assistance systems today are not Waymo or Tesla; they lack the engineering depth, the production volume, and the competitive logic that would make custom chips worth building. For them, NVIDIA’s complete package is the rational choice. And NVIDIA’s contribution is real: it enabled an entire category of companies to deploy sophisticated driver-assistance systems faster than they could have independently. That brought safer driving technology to ordinary drivers sooner, and NVIDIA deserves credit for it.

What NVIDIA hasn’t managed, and what the evidence suggests it structurally can’t, is to become the foundational platform for the companies at the frontier of full autonomy. The firms defining what genuinely driverless vehicles will look like have all concluded that owning their own compute is non-negotiable.

Aurora’s planned 2027 deployment on DRIVE Thor is the clearest near-term test of whether that conclusion has exceptions: perhaps Aurora will deliver commercial Level 4 freight autonomy at production scale on NVIDIA hardware and report that cost and capability hold up as volumes grow. If that happens, or if a major Western OEM reverses course on a planned in-house chip programme and commits to Thor at volume (absent geopolitical pressure), I’d update my views. The first outcome would suggest that NVIDIA’s ecosystem can underpin full autonomy rather than just ADAS; the second, that its ecosystem moat is deeper than current evidence indicates.

Absent those developments, the fair summary is this: NVIDIA built the tools that let most of the industry move faster than it could have on its own, but its fastest-growing customers are leaving it behind, and the firms furthest ahead either never used it or left early.

Intel’s story didn’t end with its displacement. It remains a large and profitable business, selling chips into markets that don’t require custom silicon. NVIDIA’s story in driving automation may follow a similar arc: indispensable to the many, bypassed by the few who get large enough to need something built precisely for them. The parallel is to Intel in 2018, not Intel in 2024: the frontier defections have started, but the displacement is not yet irreversible.[4] Whether NVIDIA escapes the trap is the question the next hardware generation will begin to answer.

The same dynamic that overtook Intel (defection at the frontier, followed by compounding volume losses as more customers crossed the scale threshold) is already visible in NVIDIA’s automotive business. Whether it plays out over five years or fifteen depends on how quickly the Western ADAS market matures, but I think the direction is not in question.

Respect to Rob L'Heureux, Mike Riggs and Rhishi Pethe for comments on earlier drafts.

[1] I reckon 17.6 TOPS for the Mobileye EyeQ5, given that Mobileye itself says its successor chip, the EyeQ Ultra, delivers 176 TOPS, as much as ten EyeQ5 chips combined.

Let me concede that Mobileye might object to my characterization of the firm as being “easily outpaced” by NVIDIA, given that its chips are running in more than 200 million vehicles globally. They might argue that they aren’t competing with NVIDIA so much as offering a distinct value proposition: a lower compute ceiling, but also lower cost and better power efficiency.

[2] Okay, maybe not everything. Waymo almost certainly uses general-purpose NVIDIA GPUs to train its AI models, as does virtually every AI company operating at scale. But it doesn’t deploy NVIDIA DRIVE chips in its vehicles, nor use the Omniverse-based DRIVE Sim simulation environment or the DriveOS software stack.

[3] Sadly, Cruise shut down before its chips could be finalized.

[4] One way the analogy breaks down is that Intel had no adjacent business to insulate it when its core customers defected. NVIDIA’s AV R&D is effectively cross-subsidized by the AI training market it dominates, a structural buffer Intel never had.